
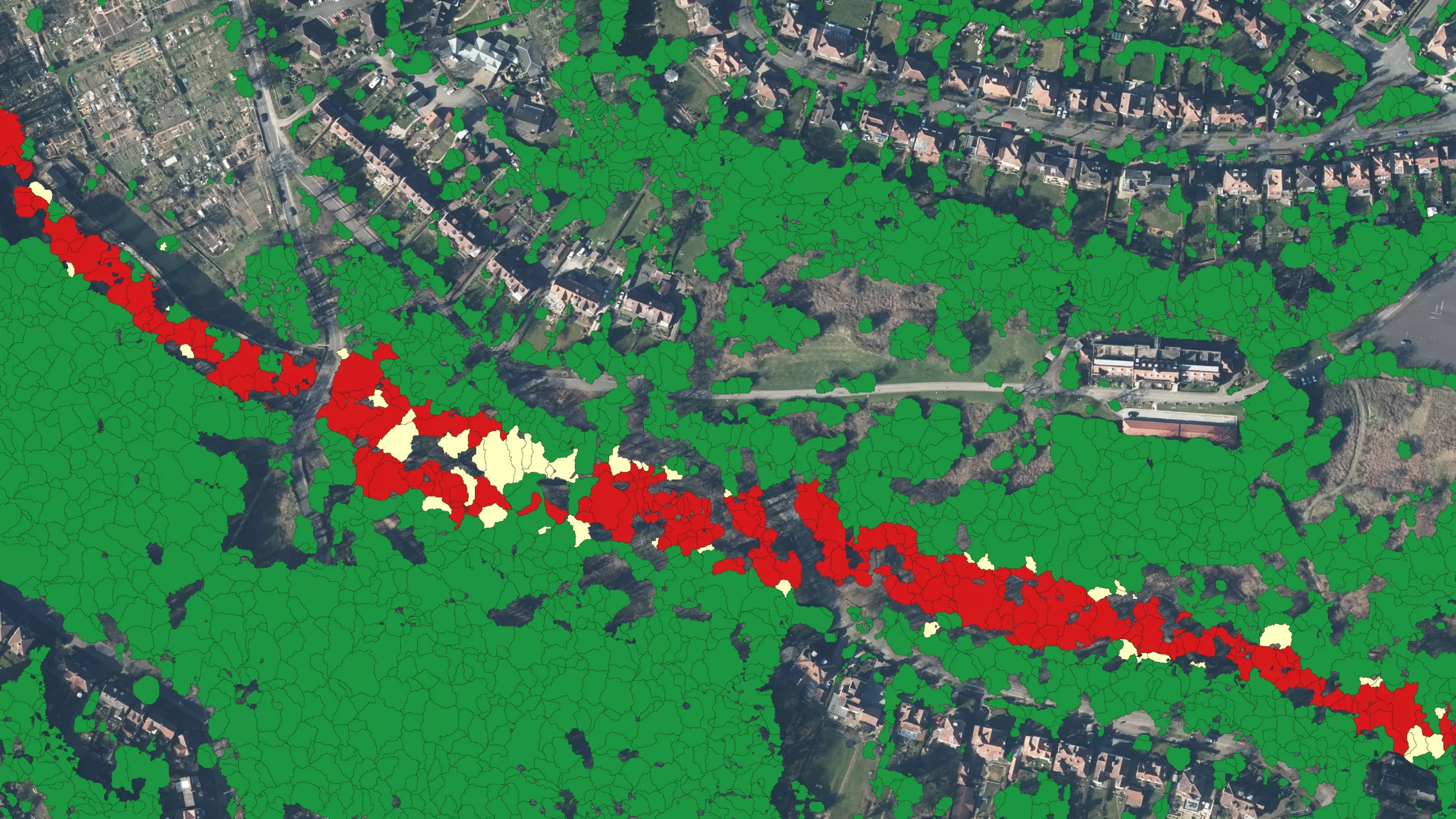

The Peak District National Park Authority, in partnership with Cranfield University and The Alan Turing Institute, is pioneering the use of Artificial Intelligence (AI) to automate the production of highly detailed land cover maps.

Using high-resolution aerial photography from Bluesky International, the Authority has developed a new variant of deep-learning network that automatically classifies the landscape into different land cover classes. This method produced a land cover map of the National Park in more detail and at higher resolution than ever before, in a fraction of the time that manual mapping would take.

Land cover maps are essential tools for measuring and monitoring the state of natural landscapes. Their application to landscape ecology, climate change mitigation, and conservation work is largely determined by their resolution and accuracy. However, the last detailed land cover census for National Parks was carried out over 30 years ago by the Countryside Commission in 1991. It used aerial photography from the early 1970s and late 1980s that was interpreted by hand, and it took a team of researchers nearly four years to map all UK National Parks.

“Repeating such a survey is not practical or affordable, so we set out to develop an easily repeatable, accurate and cost-effective way of monitoring land cover across our dynamic and varied landscapes,” commented David Alexander, Research and Data Analyst at the Peak District National Park Authority. “We partnered with Cranfield University and The Alan Turing Institute to draw on current AI research using visible colour, aerial imagery from Bluesky.”

Land cover was classified from the Bluesky photography using Convolutional Neural Networks, a deep-learning AI method that excels in labelling natural photography. “Using this methodology, broad, high-level classes, such as moorland, woodland or grasslands, were predicted with 95 per cent accuracy, equivalent to human error rates. Then, in a second pass of the networks, low-level, detailed classes, such as heather moor or deciduous woodland, were predicted with 72 to 92 per cent accuracy,” added Thijs van der Plas, who worked with the Peak District via The Alan Turing Institute. “The AI predictions were merged with topographic maps from Ordnance Survey to create a complete land cover map of the Peak District.”
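For readers who want a concrete picture of the two-pass approach, the following is a minimal Python sketch and not the project's actual code. Simple threshold rules stand in for the Convolutional Neural Networks. The class names "grass moor" and "coniferous woodland", the patch features (`canopy`, `seasonal`, `purple`) and every function name are hypothetical. The article does not say how the predictions were merged with the Ordnance Survey maps, so the sketch assumes that a topographic label, where one exists, simply replaces the prediction.

```python
# Illustrative hierarchy. "moorland", "woodland", "heather moor" and
# "deciduous woodland" appear in the article; the other names are placeholders.
HIERARCHY = {
    "moorland": ["heather moor", "grass moor"],
    "woodland": ["deciduous woodland", "coniferous woodland"],
}


def classify_two_pass(patch, coarse_model, fine_models):
    """First pass picks a broad class; second pass refines it within that class."""
    coarse = coarse_model(patch)
    fine = fine_models[coarse](patch)
    if fine not in HIERARCHY[coarse]:
        raise ValueError(f"{fine!r} is not a sub-class of {coarse!r}")
    return coarse, fine


def merge_with_topography(predicted, topographic):
    """Assumed merge rule: a topographic label (not None) overrides the prediction."""
    return [
        [topo if topo is not None else pred for pred, topo in zip(p_row, t_row)]
        for p_row, t_row in zip(predicted, topographic)
    ]


def accuracy(predicted, reference):
    """Fraction of grid cells whose predicted label matches the reference label."""
    pairs = [
        (p, r)
        for p_row, r_row in zip(predicted, reference)
        for p, r in zip(p_row, r_row)
    ]
    return sum(p == r for p, r in pairs) / len(pairs)


# Stand-ins for trained networks: hypothetical threshold rules on made-up features.
def coarse_stub(patch):
    return "woodland" if patch["canopy"] > 0.5 else "moorland"


FINE_STUBS = {
    "woodland": lambda p: "deciduous woodland" if p["seasonal"] > 0.5 else "coniferous woodland",
    "moorland": lambda p: "heather moor" if p["purple"] > 0.5 else "grass moor",
}

# A 1 x 2 grid of image patches, described by the made-up features.
patches = [[
    {"canopy": 0.9, "seasonal": 0.8, "purple": 0.0},
    {"canopy": 0.1, "seasonal": 0.0, "purple": 0.9},
]]

fine_map = [[classify_two_pass(p, coarse_stub, FINE_STUBS)[1] for p in row] for row in patches]
topo_map = [[None, "road"]]  # hypothetical topographic layer
final_map = merge_with_topography(fine_map, topo_map)
```

Accuracy figures such as those quoted above are computed by comparing predicted labels against a hand-labelled reference grid, which is what the `accuracy` helper does here in the simplest possible form.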

Dr Daniel Simms, Senior Lecturer in Remote Sensing at Cranfield University, said, “AI, in combination with earth observation information such as the Bluesky aerial photography, provides significant advantages over direct measurement and other digital methods, such as ground-based photography. Predictions are fast, as no field visits are required for data collection, and continuous over the landscape compared to time-consuming and resource-intensive surveys of individual habitats, or sensor networks at fixed locations. The methods developed for this project can have much wider applications in monitoring biodiversity across the UK Protected Landscapes and inform and deliver on the government’s pledge to protect 30% of the UK’s land and sea by 2030.”

Ralph Coleman, Chief Commercial Officer at Bluesky, said, “Making our nationwide cover of the highest resolution, most up-to-date aerial data available to projects such as these through the APGB contract means we are helping to drive innovation across a number of sectors. This pioneering use of AI for landscape monitoring clearly achieves efficiencies and savings which can be applied to tackling issues such as loss of biodiversity and habitat fragmentation, and demonstrates a working model that can be applied to other scenarios.”

Bluesky is the lead consortium member of the Geospatial Commission’s APGB contract. The contract gives organisations such as the Peak District National Park Authority, alongside local authorities, emergency services, environmental bodies and central government departments, access to high-quality aerial data that is free at the point of use. Other data available from Bluesky under this contract includes 3D height models and Colour Infrared imagery.

The link to the report can be found here: https://www.mdpi.com/2072-4292/15/22/5277